“Advanced Engineering Research (Rostov-on-Don)” is a peer-reviewed scientific and practical journal. It aims to inform readers about the latest achievements and prospects in Mechanics, Mechanical Engineering, Computer Science and Computer Technology. The journal is a forum for cooperation between Russian and foreign scientists and contributes to the convergence of the Russian and world scientific and information space.

Priority is given to publications in theoretical and applied mechanics, mechanical engineering and machine science, friction and wear, methods of control and diagnostics in mechanical engineering, and welding production. Along with the discussion of global trends in these areas, attention is paid to regional research, including mathematical modeling, numerical methods and software packages, software and mathematical support of computer systems, and information technology challenges.

All articles are published in Russian and English and undergo a peer-review procedure.

The journal is included in the List of peer-reviewed scientific editions, in which the main scientific results of dissertations for the degrees of Candidate and Doctor of Science are published (List of the Higher Attestation Commission under the Ministry of Science and Higher Education of the Russian Federation).

The journal covers the following fields of science:

- Theoretical Mechanics, Dynamics of Machines (Engineering Sciences)

- Deformable Solid Mechanics (Engineering Sciences, Physical and Mathematical Sciences)

- Mechanics of Liquid, Gas and Plasma (Engineering Sciences)

- Mathematical Simulation, Numerical Methods and Program Systems (Engineering Sciences)

- System Analysis, Information Management and Processing, Statistics (Engineering Sciences)

- Automation and Control of Technological Processes and Productions (Engineering Sciences)

- Software and Mathematical Support of Machines, Complexes and Computer Networks (Engineering Sciences)

- Computer Modeling and Design Automation (Engineering Sciences, Physical and Mathematical Sciences)

- Computer Science and Information Processes (Engineering Sciences)

- Machine Science (Engineering Sciences)

- Machine Friction and Wear (Engineering Sciences)

- Technology and Equipment of Mechanical and Physicotechnical Processing (Engineering Sciences)

- Engineering Technology (Engineering Sciences)

- Welding, Allied Processes and Technologies (Engineering Sciences)

- Methods and Devices for Monitoring and Diagnostics of Materials, Products, Substances and the Natural Environment (Engineering Sciences)

- Hydraulic Machines, Vacuum, Compressor Equipment, Hydraulic and Pneumatic Systems (Engineering Sciences)

The editorial policy of the journal is based on the traditional ethical principles of Russian scientific periodicals. It supports the Code of Ethics of Scientific Publications formulated by the Committee on Publication Ethics (Russia, Moscow) and adheres to the ethical standards for editors and publishers set out in the Code of Conduct and Best Practice Guidelines for Journal Editors and the Code of Conduct for Journal Publishers, developed by the Committee on Publication Ethics (COPE).

The journal is addressed to those who shape the strategic directions of modern science: scientists, graduate students, engineers and technical specialists, research staff of institutes, and practicing teachers.

About the journal

In September 2020, the scientific journal “Vestnik of Don State Technical University” (ISSN 1992-5980) changed its title.

The new title of the journal is “Advanced Engineering Research (Rostov-on-Don)” (eISSN 2687-1653).

The journal “Advanced Engineering Research (Rostov-on-Don)” was registered with the Federal Service for Supervision of Communications, Information Technology and Mass Media on August 7, 2020 (extract from the register of registered mass media ЭЛ №ФС 77-78854, electronic edition).

All articles of the journal have a DOI registered with Crossref.

Founder and publisher: Federal State Budgetary Educational Institution of Higher Education "Don State Technical University", Rostov-on-Don, Russian Federation, https://donstu.ru/

ISSN (online) 2687-1653

Year of foundation: 1999.

Frequency: 4 issues per year (March 30, June 30, September 30, December 30).

Distribution: Russian Federation.

The journal "Advanced Engineering Research (Rostov-on-Don)" accepts for publication original articles, studies, and review papers that have not been previously published.

Website: https://www.vestnik-donstu.ru/

Editor-in-Chief: Alexey N. Beskopylny, Dr. Sci. (Engineering), Professor (Rostov-on-Don, Russia).

Languages: Russian, English

Key characteristics: indexing, peer-reviewing.

Licensing history:

The journal uses the Creative Commons Attribution 4.0 International (CC BY) license.

Current issue

MECHANICS

An exact analytical solution to the Navier–Stokes equations is derived for the first time, describing Couette flow between permeable plates subject to a quadratic velocity boundary condition. Parametric analysis demonstrates that the linear inhomogeneity coefficient specifies the asymmetry observed in both the velocity and vorticity fields. The quadratic inhomogeneity of the boundary condition controls the nonlinearity of the transverse flow distribution across the channel. Viscosity determines the thickness of the shear layer, governing the transition from a nearly linear velocity profile to pronounced localization of shear near the walls. Numerical simulations demonstrate a reversal in flow direction upon changing the sign of the parameters, accompanied by a twofold variation in velocity gradients. These findings are relevant for flow manipulation in microfluidics, membrane systems, and tribological applications.

Introduction. Flow control in microfluidic systems, membrane technologies, and porous bearings requires an understanding of the synergy between boundary permeability, their spatial inhomogeneity, and the viscosity of the working fluid. Each of these factors is actively studied separately. However, a comprehensive analytical description of their combined effect on the flow is needed. No such publications exist. The presented article fills this gap. Research objectives are as follows: to obtain an analytical solution for the velocity field in Couette flow with permeable boundaries and a nonlinear boundary condition; to study the formation of hydrodynamics under the influence of permeability (α), dynamic viscosity (μ), linear (A) and quadratic (B) inhomogeneity of the boundary condition.

Materials and Methods. The analytical solution is based on the stationary Navier–Stokes equations for an incompressible Newtonian fluid, with a quadratic expansion of velocity along the transverse coordinate. The axial, linear, and quadratic modes of the velocity profile were investigated using numerical modeling in MATLAB. For a stationary, laminar, isothermal flow of a Newtonian viscous incompressible fluid, the distance between permeable plates was h = 1 m. The lower plate was stationary, while the upper plate moved with a velocity of W = 0.3 m/s. The liquid filtration rate was Vw = 0.001 m/s, and μ = 0.01 Pa·s for A = ±0.03 s⁻¹ and B = ±0.005 m⁻¹·s⁻¹. Water, motor oil, and crude oil were studied at temperatures of 20 °C, 40 °C, or 60 °C. For this case, h = 0.02 m, W = 0.05 m/s, A = 0.1 s⁻¹, B = 0.02 m⁻¹·s⁻¹, Vw = 0.0005 m/s. Depending on the fluid and temperature, μ ranged from 0.05 to 9.15·10⁻³ Pa·s.
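For orientation, the sketch below evaluates the textbook velocity profile for Couette flow with uniform cross-flow through the plates, u(z) = W·(exp(Vw·z/ν) − 1)/(exp(Vw·h/ν) − 1). This is the classical uniform-transpiration case only, not the article's solution with linear (A) and quadratic (B) boundary inhomogeneity. The density value `rho` is an assumption introduced here to convert the dynamic viscosity μ to kinematic viscosity; the abstract does not state it.

```python
import math


def couette_transpiration_profile(z, h, W, Vw, mu, rho):
    """Velocity u(z) for classical Couette flow with uniform cross-flow Vw.

    Lower plate (z = 0) is at rest, upper plate (z = h) moves with speed W.
    """
    nu = mu / rho              # kinematic viscosity, m^2/s
    re_w = Vw * h / nu         # cross-flow Reynolds number
    if abs(re_w) < 1e-12:      # no cross-flow: plain linear Couette profile
        return W * z / h
    # expm1 keeps precision when the exponent is small
    return W * math.expm1(re_w * z / h) / math.expm1(re_w)


# Parameters of the first case quoted in the abstract
h, W, Vw, mu = 1.0, 0.3, 0.001, 0.01
rho = 1000.0  # ASSUMPTION: water-like density, not given in the abstract

profile = [couette_transpiration_profile(k * h / 10, h, W, Vw, mu, rho)
           for k in range(11)]
for k, u in enumerate(profile):
    print(f"z = {k * h / 10:.1f} m  u = {u:.3e} m/s")
```

With these inputs the cross-flow Reynolds number is about 100, so nearly all of the shear is concentrated in a thin layer at the moving plate. Very large values of `re_w` (above roughly 700) would overflow `math.expm1` and need an asymptotic form instead.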

Results. Asymmetry of the flow, deviation from the channel axis, and variability of the vorticity amplitude ωy were visualized. The filtration velocity was zero at the lower plate (z = 0) and increased with z, reaching a maximum at the upper plate, z = h (the distance between the plates). For water, the streamlines exhibited minimal deviation from the horizontal, while for motor oil at 20 °C, they curved near the upper wall. Two-dimensional vorticity fields for water, motor oil, and crude oil at various temperatures were compared. For water and crude oil, weak vorticity and reduced viscosity resulted in negative values of ωy. For motor oil, the situation was reversed: positive values corresponded to elevated ωy.

Discussion. The calculation results allow us to conclude:

- changing the sign of A inverts the directions of the maxima for velocity and vorticity;

- the sign of B determines the curvature of the isolines;

- the thickness of the layer with the maximum velocity gradient changes by two orders of magnitude when transitioning from water to oil.

The identified patterns are explained by the physical meaning of the parameters: A defines the macroscopic flow asymmetry, B governs the distribution of the transverse flow, and viscosity, through α, controls the depth of boundary perturbations.

Conclusion. For the first time, an exact analytical solution to the stationary Navier–Stokes equations was obtained for generalized Couette flow of a Newtonian fluid between permeable plates with a quadratic velocity profile at the boundary. A parametric analysis has shown that coefficient A determines the asymmetry of the velocity and vorticity fields, while B determines their nonlinearity. Viscosity controls the thickness of the shear layer: for high-viscosity media, the velocity drop is localized near the walls, while for low-viscosity media, the profile is linear. The results provide a foundation for applications in microfluidics, membrane technologies, and tribology. Future prospects are associated with accounting for non-Newtonian fluid properties, unsteady regimes, and flow stability.

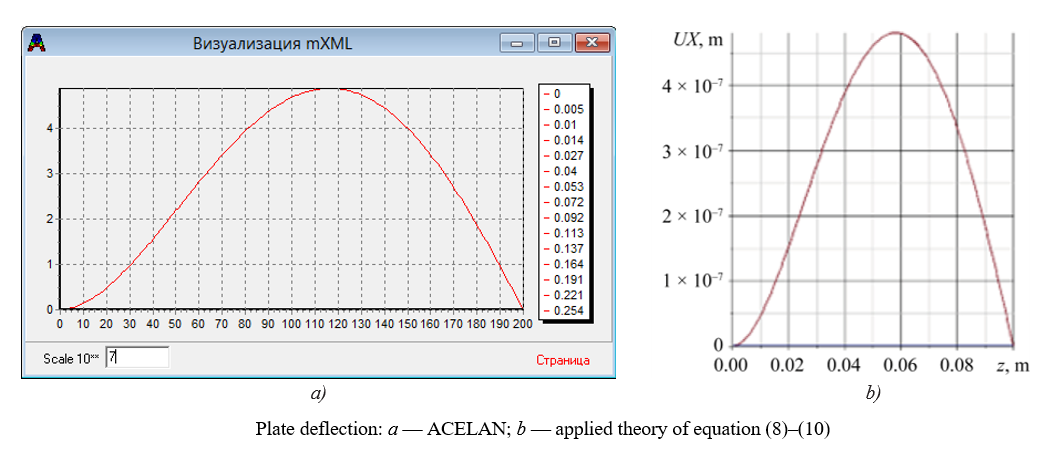

A two-dimensional theory of vibrations of layered piezoelectric plates is developed. The three-dimensional problem is reduced to a two-dimensional model. Porosity of the active elements is taken into account. The error in frequency calculations is no greater than 1%. The results are confirmed by the finite element method. The theory is applicable to the design of transducers and sensors.

Introduction. The development of ultrasonic technology requires the creation of piezoelectric transducers with improved operational and metrological characteristics. One of the most promising directions is the use of composite materials. As shown in the literature, porous piezoceramics possess a unique property: their piezoelectric modulus d33 is practically independent of porosity, whereas the elastic moduli noticeably decrease as porosity increases. This opens up possibilities for the design of high-performance devices, particularly composites with a polymer matrix and porous piezoelectric ceramic rods with axial polarization. However, despite the sufficient study of their static properties, theoretical analysis of the dynamic behavior of such structures, including their simplified two-dimensional models, under bending vibrations and longitudinal polarization, is virtually absent in the scientific literature. In this regard, the objective of the work is to develop a simplified mathematical model for the analysis of bending vibrations of a layered plate of the specified composite and to identify the effect of porosity on its dynamic characteristics.

Materials and Methods. The structure is made of a piezoelectric composite consisting of several layers. Each layer is a 1–3 piezoelectric composite formed by a polymer matrix and porous, longitudinally polarized piezoceramic rods. The mathematical formulation of the boundary value problems is performed within the framework of the linear theory of electroelasticity. Based on the Kirchhoff–Love hypotheses and assumptions regarding the electric potential distribution, an applied method for calculating steady-state bending vibrations of a layered plate is proposed. The adequacy of the approach is verified by comparison with the results of finite element modeling implemented in the ACELAN package.

Results. The key outcome of the study was the development and successful testing of an applied theory that reduced the three-dimensional boundary-value problem of electroelasticity for layered piezoelectric elements to a simpler two-dimensional formulation. This significantly reduced calculation time compared to traditional finite element methods while maintaining the required accuracy. To verify the proposed model, numerical testing was performed by comparing it with calculations in the ACELAN software package. The comparative analysis showed almost complete agreement between the results in the low-frequency range, including the precise determination of the first bending mode frequency. The obtained correspondence confirmed the high adequacy and reliability of the developed method, demonstrating its applicability as an efficient tool for the analysis and optimal design of piezoelectric devices.

Discussion. One of the key challenges in the design of layered piezoelectric transducers is the high resource intensity of three-dimensional modeling without a transition to effective characteristics, which significantly limits optimization possibilities. The proposed approach, based on reducing the three-dimensional problem to a two-dimensional one, represents a significant step forward in addressing this issue. Its main advantage is the reduction in computational costs and the possibility of using simpler software tools than “heavy” CAE packages in numerical analysis. This opens the way to multiple runs, including those employing evolutionary algorithms, when searching for the optimal geometry and structure of the piezoelectric element. Validation of the model against calculations in the ACELAN finite element package has shown a high degree of correspondence in the low-frequency region, which confirms its adequacy for practical application. At the same time, the identified limitations related to the frequency range and differences in the elastic properties of the layers outline the boundaries of applicability and set directions for subsequent research.

Conclusion. An efficient calculation method has been developed and tested. It reduces the three-dimensional boundary value problem of electroelasticity for layered piezoelectric elements to a two-dimensional formulation. The main outcome is a significant acceleration of numerical modeling while maintaining accuracy. The proposed theory gives accurate results in the low-frequency range, up to the first flexural mode, as confirmed by comparison with reference finite element data from ACELAN. This demonstrates the practical significance of the method as an efficient tool for the iterative search for the optimal design of transducers. The method can be applied in engineering practice to design new types of piezoceramic devices, and the applied theory can be developed further by expanding the frequency range and adapting it to more complex multilayer structures.

MACHINE BUILDING AND MACHINE SCIENCE

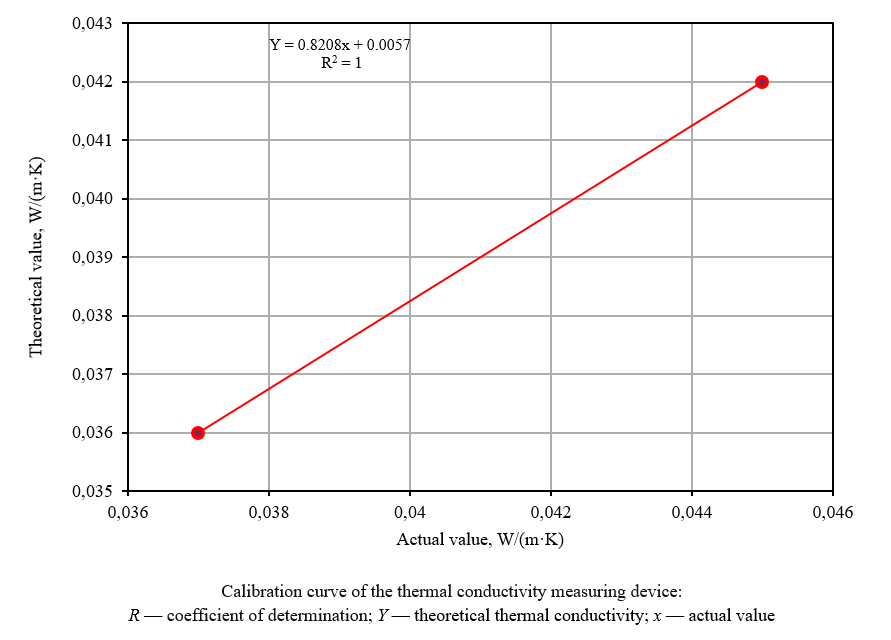

Composites made from recycled tire rubber and polyester are studied. Increasing the rubber content to 50% reduces density by 22% and thermal conductivity by 19%. Water absorption remains minimal, not exceeding 0.5%. High compressive strength allows the composite to be used in loaded structures. The materials can be used as thermal insulation in construction and industry. This work addresses the environmental issue of tire recycling.

Introduction. The disposal of automotive tires typically involves landfilling, stockpiling, or incineration, which causes soil and atmospheric pollution. The recycling of tire rubber has long been actively discussed as one approach to solving environmental problems. It is known that the use of rubber crumb in composites can reduce their weight and thermal conductivity. However, materials based on unsaturated polyester containing rubber waste have been insufficiently studied. There are contradictions in the assessment of their mechanical and thermal insulation properties. Moreover, the optimal rubber content in the composite is unknown. The presented work addresses these gaps. The research objectives include the development and analysis of new materials based on unsaturated polyester with justification for the required proportion of rubber waste.

Materials and Methods. During the processing of tire rubber, a multistage grinding process was carried out, followed by magnetic and air separation. A powder with a density of 500 kg/m³ was obtained. The minimum particle size was 0.1 mm, and the maximum was up to 1 mm. The composite matrix was unsaturated polyester with a density of 1160 kg/m³. To fabricate the specimens, 0, 10, 20, 30, 40, and 50% rubber filler were added to it. Stable geometry was achieved through curing at room temperature and subsequent mechanical processing. For each composition, three specimens with an area of 0.021 m² and a thickness of 0.01 m were produced.

Results. The dependence of density, water absorption, and thermal conductivity of the samples on the volume of recycled tire rubber was shown. As its proportion increased, a noticeable decrease in density was recorded: at 0% — 1160 kg/m³; at 10% — 1074.3; at 20% — 1037.2; at 30% — 1017.8; at 40% — 963.7; at 50% — 905. Water absorption dynamics were determined by the weight of the samples after immersion in water. It took more than 8 hours for changes (even minor ones) to occur. The indicator in percentage terms increased from 0.024% to 0.47%, meaning the absolute maximum was <0.5%. As the rubber content increased, thermal conductivity decreased. The value for pure polyester was 0.254854 W/(m·K); for the composite with 10% rubber — 0.2510574; with 20% — 0.245156; with 30% — 0.238484; with 40% — 0.223062; with 50% — 0.207039. All samples withstood a load of 1300 kN.

Discussion. The incorporation of 50% rubber into unsaturated polyester results in a 22% reduction in sample density and a 19% decrease in thermal conductivity, with water absorption remaining under 0.5%. These properties suggest the suitability of the composite as an efficient insulation material, even in environments with elevated humidity. Its high compressive strength (>61.83 MPa) allows for its use in structures subjected to significant loads. Varying the rubber content will provide an optimal balance between mechanical properties and moisture resistance.
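The percentages quoted above can be reproduced directly from the density and thermal conductivity values reported in the Results. The short sketch below does that arithmetic. It also estimates the compressive stress from the 1300 kN load and the rounded 0.021 m² specimen area, under the assumption (made here, not stated in the abstract) that the load acts uniformly over that area.

```python
# Values reported in the abstract, keyed by rubber content in percent
density = {0: 1160.0, 10: 1074.3, 20: 1037.2, 30: 1017.8, 40: 963.7, 50: 905.0}  # kg/m^3
conductivity = {0: 0.254854, 10: 0.2510574, 20: 0.245156,
                30: 0.238484, 40: 0.223062, 50: 0.207039}                         # W/(m*K)


def relative_reduction(series, base=0, level=50):
    """Percentage drop of a property between two rubber contents."""
    return 100.0 * (series[base] - series[level]) / series[base]


density_drop = relative_reduction(density)
conductivity_drop = relative_reduction(conductivity)

# ASSUMPTION: the 1300 kN load is spread uniformly over the 0.021 m^2 face
load_n = 1300e3
area_m2 = 0.021
stress_mpa = load_n / area_m2 / 1e6

print(f"density reduction:      {density_drop:.1f} %")
print(f"conductivity reduction: {conductivity_drop:.1f} %")
print(f"compressive stress:     {stress_mpa:.1f} MPa")
```

The reductions round to 22% and 19%, and the stress estimate comes out near 62 MPa, in line with the reported lower bound of 61.83 MPa given that the area is quoted to two significant figures.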

Conclusion. This work presents an approach for the sustainable recycling of tires to produce effective insulation materials. Promising directions for future study include investigating composites with larger rubber particles (>1 mm), evaluating their acoustic insulation properties, and assessing their fire resistance and chemical stability.

Heating during turning of metal-composite systems is studied. A measuring unit with wireless data transmission is developed. A regression model of the temperature response is built. Cutting depth has the greatest impact on temperature increase. Safe machining conditions are identified. The results are applicable to the design of turning technologies.

Introduction. Modern technologies of tool and mold production increasingly use metal-composite systems (MCS), which combine additively manufactured metal shells and metal-polymer fillers. This corresponds to priority areas of scientific and technological progress, such as digitalization and additive manufacturing (in accordance with the Federal Project “Development of Materials and Production Technologies” within the framework of the national program “Scientific and Technological Development”). The scope of application of MCS in industry is growing: according to industry reviews, their share in the production of high-precision components for the aerospace and automotive industries has increased by 25–30% over the past five years, providing economic benefits due to a 15–20% reduction in the weight of structures and improvement of the energy efficiency of processes. Such systems combine the strength and thermal conductivity of metal with the damping properties of polymers, yet exhibit high sensitivity to overheating during machining. Consequently, the temperature at the metal–MPCM (metal-polymer composite material) interface during turning may exceed the thermal stability threshold (170 °C), resulting in thermal degradation, loss of adhesion, and shell deformation. In the literature, the problem of MCS thermal stability in turning is addressed only fragmentarily: existing studies focus on monolithic composites or general heat-transfer models, lacking detailed analysis of interfacial heating in additively manufactured systems featuring low-conductivity fillers. Therefore, research is needed to quantify the thermal response during the machining of such systems and to determine the cutting parameters that provide their thermal stability.
The objective of this work is to experimentally study the temperature response during turning of MCS with a shell thickness of δ = 3.5 mm and to construct a second-order regression model linking the temperature at the metal–MPCM interface with the cutting parameters.

Materials and Methods. A hardware-software measurement unit simulating the MCS structure was developed for the study. It included a replaceable bushing made of 12Kh18N10T steel, an internal insert made of Ferro-Chromium metal-polymer, three built-in type K thermocouples, and a data acquisition module based on an ESP32-WROOM microcontroller with MAX6675 converters, providing temperature recording at 5 Hz and data transmission via Wi-Fi. The accuracy of the measurements was confirmed by thermal imaging verification using FLUKE Ti400. The experiment was conducted according to the full factorial design (FFD) 2³ + n0, in which cutting speed V, feed S and cutting depth t were varied. Data processing was performed by the least-squares method with adequacy validation using Fisher's F-test and coefficient significance by Student's t-test. Based on the results of processing in real physical units, a second-order regression model was constructed — model 3.5TP, designed for engineering prediction.
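As an illustration of the experimental layout, the sketch below builds a coded 2³ full factorial design with centre runs and estimates first-order effects by least squares. Because the coded columns are mutually orthogonal, the least-squares estimates reduce to simple sums. Everything numeric here is hypothetical: the number of centre runs, the response values, and the coefficients are synthetic placeholders, and the fit is a first-order model only, not the article's second-order 3.5TP model.

```python
from itertools import product


def full_factorial_2k_with_center(k=3, n_center=3):
    """Coded design: 2^k corner runs at -1/+1 plus n_center runs at 0."""
    corners = [list(p) for p in product((-1, 1), repeat=k)]
    centers = [[0] * k for _ in range(n_center)]
    return corners + centers


def fit_main_effects(design, y):
    """Least-squares fit of y = b0 + sum(b_j * x_j).

    Valid in this closed form because the coded columns are orthogonal
    to each other and to the intercept column.
    """
    n = len(design)
    b0 = sum(y) / n
    coeffs = []
    for j in range(len(design[0])):
        sxx = sum(row[j] ** 2 for row in design)
        sxy = sum(row[j] * yi for row, yi in zip(design, y))
        coeffs.append(sxy / sxx)
    return b0, coeffs


design = full_factorial_2k_with_center(k=3, n_center=3)  # columns: V, S, t (coded)

# SYNTHETIC response in degrees C; coefficients are invented for illustration
y = [120.0 - 5.0 * v - 8.0 * s + 20.0 * t for v, s, t in design]

b0, (b_v, b_s, b_t) = fit_main_effects(design, y)
print(f"b0 = {b0:.1f}, bV = {b_v:.1f}, bS = {b_s:.1f}, bt = {b_t:.1f}")
```

In a two-level design the centre runs do not allow the pure quadratic terms to be estimated individually; they only indicate pooled curvature, which can be gauged by comparing the mean response at the centre with the mean over the corner runs.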

Results. The analysis of the experimental data showed that the thermal response of the metal–composite system was nonlinear. The depth of cut t was the dominant factor increasing the temperature, whereas within the investigated range, an increase in the feed rate S and cutting speed V led to a decrease in the interface temperature due to a shorter thermal exposure time and more intensive heat removal with the chip flow. The resulting 3.5TP model was characterized by the coefficient of determination R² = 0.9513, Fisher criterion value F = 364.31 and the significance level p < 10⁻⁵, which validated its adequacy. Interpretation of the regression coefficients indicated that the depth of cut (t) had the strongest impact on the temperature rise, the feed rate (S) showed a moderate effect, and the cutting speed (V) had the least sensitivity within the investigated range. The constructed response surfaces and contour maps identified the “safe zones” of cutting conditions that satisfied the constraint T ≤ 170 °C, corresponding to the thermal stability limit of the metal–polymer filler. The average deviation between the experimental and calculated data did not exceed 7 °C, which confirmed the high accuracy and predictive capability of the proposed model.

Discussion. The constructed 3.5TP model revealed the relationship between the geometric and technological factors that determine the thermal load of the MCS during turning. The dominant impact of the depth of cut was due to the increase in the volume of the cut layer and heat generation in the contact zone, while the increase in feed and cutting speed was accompanied by compensating effects due to a decrease in the time of thermal contact and more intense heat removal with the chips. The results obtained indicated the need to optimize processing modes taking into account the shell thickness δ. Directions for further research were identified.

Conclusion. The conducted study demonstrates that the developed experimental setup accurately reproduces the thermal behavior of a metal–composite system composed of an additively manufactured metal shell and a metal–polymer filler. The constructed 3.5TP regression model adequately describes the temperature response during turning and can be used for engineering prediction of mechanical processing modes.

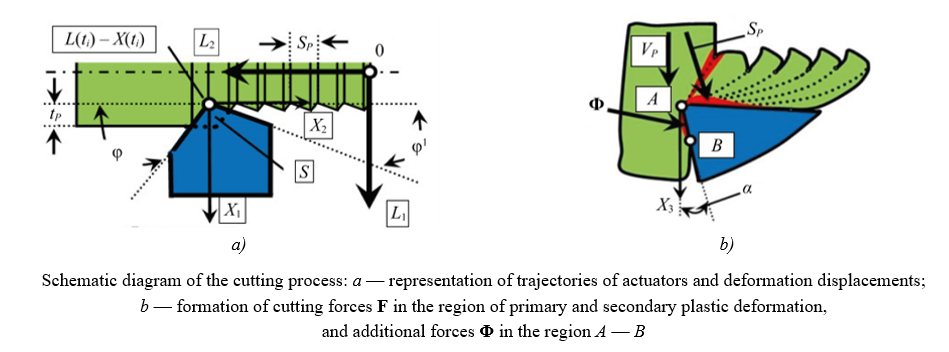

The effect of high-frequency vibrations on tool flank wear is studied. A mathematical model of a dynamic cutting system with vibrations is developed. It is found that vibrations with an amplitude of 10 micrometers reduce wear by half. The optimal amplitude depends on the tool clearance angle and wear stage. The results are applicable for wear prediction and selection of machining modes. The models make it possible to develop tool condition monitoring systems.

Introduction. The wear rate of a cutting tool can be controlled by introducing additional vibrations into the cutting zone. The effect of vibration parameters on tool wear appears to be well-studied. However, the conclusions of some such studies are contradictory. It is noted that vibrations of varying amplitudes can both increase and decrease wear. There are no analytical models in the literature that resolve this contradiction or reflect the nonlinear relationship between the tool and workpiece subsystems under cutting. Furthermore, the fact that wear on different tool faces requires different force interaction models is not taken into account. The present research fills these gaps. The objective of the study is to determine the patterns of impact of high-frequency vibrations (HFV) on tool flank wear.

Materials and Methods. The data from mathematical modeling of the dynamic cutting system in Simulink were used, taking into account the forces on the back face, effective parameters, and the HFV. Equipment: 16K20 machine tool, vibration control measuring stand with a frequency range of 0.4–15000 Hz, computer, E20-10 analog-to-digital converter, acoustic system, and STD.201-1 cutting force testing stand. Workpieces made of 10GN2MFA steel with a diameter of D = 84 mm were machined using tools with brazed T15K6 plates without lubrication.

Results. The effect of the HFV on the contact interaction forces along the tool flank and the phase trajectory of the tool deformation displacements are demonstrated for different HFV amplitudes: from 0.5·10⁻² to 2·10⁻² mm. It is established that power N of irreversible energy transformations (IET) depends on the direction of the introduced vibrations. The dependence of tool wear rate on additional vibrations with amplitudes of 5 and 10 µm in different directions at cutting speeds of 1 m/s, 1.4 m/s, and 2 m/s is shown. The results obtained are compared with wear trajectories without disturbances.

Discussion. The optimal amplitude of additional vibrations in the feed direction depends on the tool clearance and decreases with wear stage. The maximum wear value drops from 0.55 mm to 0.35 mm when introducing vibrations with an amplitude of 5 µm and to 0.26 mm — at 10 µm. With additional vibrations in the tangential direction, wear rate depends weakly on the amplitude of the introduced vibrations, as it is many times smaller than the velocity of the tool vibrational displacements. The maximum wear value decreases from 0.65 mm to 0.6 mm at 5 µm and to 0.48 mm — at 10 µm. With increased wear, there is no optimal amplitude for additional vibrations.
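The wear figures above translate into relative reductions as follows. This is plain arithmetic on the maximum wear values quoted in the Discussion; the helper name `percent_reduction` is introduced here for illustration only.

```python
def percent_reduction(baseline, value):
    """Relative decrease of `value` with respect to `baseline`, in percent."""
    return 100.0 * (baseline - value) / baseline


# Maximum flank wear in mm, keyed by vibration amplitude in micrometres
feed = {5: percent_reduction(0.55, 0.35), 10: percent_reduction(0.55, 0.26)}
tangential = {5: percent_reduction(0.65, 0.60), 10: percent_reduction(0.65, 0.48)}

for amplitude in (5, 10):
    print(f"{amplitude:>2} um  feed: {feed[amplitude]:.1f} %  "
          f"tangential: {tangential[amplitude]:.1f} %")
```

In the feed direction the 10 µm amplitude cuts the maximum wear by a little over half, which matches the summary statement, while the tangential direction gives a markedly smaller reduction at both amplitudes.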

Conclusion. The developed models allow for a quantitative assessment of the impact of HFV on the tool flank wear rate and the appropriate selection of vibration parameters introduced into the cutting zone. This allows for the creation of:

- virtual models of the cutting process and the selection of modes to minimize wear rate;

- wear monitoring systems with a comprehensive approach to prediction.

Next, it is required to study the dynamics of the cutting process at HFV amplitudes greater than 10–15 µm.

INFORMATION TECHNOLOGY, COMPUTER SCIENCE AND MANAGEMENT

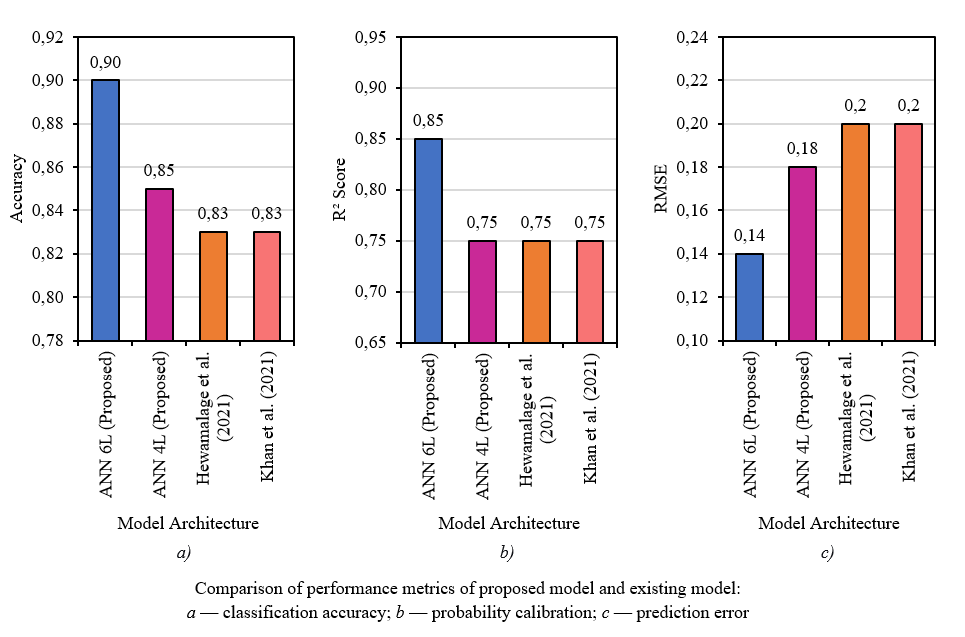

A new CVLV framework for customer churn prediction is proposed. It combines deep learning models with customer lifetime value (CLV). A two-layer RNN achieves high accuracy for high-value customers. A three-layer ANN ensures better prediction stability. The framework enables strategic allocation of retention efforts. The results are applicable to telecommunications, banking, and retail.

Introduction. Customer churn prediction represents a challenge in the current era of rapid digital transformation, hyper-competition, and data-driven marketing. In sectors such as telecommunications and banking, even marginal reductions in churn translate to significant revenue protection. Numerous companies employ uniform approaches, leading to the inefficient allocation of marketing resources and loss of loyal customers. Recent research has advanced along two largely separate domains. The first focuses on improving predictive accuracy through machine learning and deep learning techniques. Another stream, rooted in marketing science, emphasizes the economic dimension of churn, introducing Customer Lifetime Value (CLV) as a key metric. Existing solutions either maximize accuracy at high computational cost or discuss value-based strategy without providing a technical, implementable system. To bridge this gap, this paper aims to create, test, and present a comprehensive churn control system integrating customer lifetime value framework (CVLV). To achieve this, the following tasks are addressed: segmenting customers based on dynamic CLV and churn risk scores; evaluating the efficiency of various neural network configurations; and building a decision model that assigns optimal deep learning architectures for targeted retention, seamlessly integrating data analytics with corporate strategy.

Materials and Methods. The study was performed on two datasets: IBM Telco Customer Churn (7,043 customers, 21 features, binary churn) and Santander Customer Transaction Prediction (200,000 records, 200 numerical features, binary target variable). The data were preprocessed to address class imbalance and split 70/15/15 (train/validation/test) using 5-fold cross-validation. ANN (3–6 layers) and RNN/LSTM models were compared within the CVLV framework. The training utilized the Adam optimizer, L2 regularization, dropout, early stopping, gradient clipping, and uniform batch size and epoch settings. The performance was evaluated based on accuracy, loss, and the Pareto frontier. Subsequently, customers were segmented by CLV/risk level, and retention strategies were assigned to the respective optimal models.
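A 70/15/15 split of an imbalanced binary dataset is usually done per class so that each subset keeps the original churn rate. The sketch below shows one way to do this with the standard library only. The function name, the seed, and the synthetic labels are assumptions for illustration; the abstract does not say how the authors performed the split, how it was combined with 5-fold cross-validation, or how class imbalance was handled.

```python
import random


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return (train, val, test) index lists that preserve class proportions."""
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)                      # shuffle within each class
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]         # remainder goes to test
    return train, val, test


# SYNTHETIC labels: 300 churners (1) and 700 non-churners (0)
labels = [1] * 300 + [0] * 700
train_idx, val_idx, test_idx = stratified_split(labels)

for name, idx in (("train", train_idx), ("val", val_idx), ("test", test_idx)):
    share = sum(labels[i] for i in idx) / len(idx)
    print(f"{name}: {len(idx)} rows, churn share {share:.2f}")
```

Each subset keeps the 30% churn share of the synthetic data, so accuracy and loss measured on the validation and test parts are comparable with the training part.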

Results. The comprehensive assessment of artificial neural networks (ANN) and recurrent neural networks (RNN) shows that the 2-layer RNN achieved a marginally higher accuracy of 0.90, while the 3-layer ANN was the most robust, with a loss of 0.25 and similar predictive performance. Within the CVLV framework, RNN 2L is assigned to high-value, high-risk relationships that need the most precision, ANN 3L to stable, high-value relationships, and a general RNN to low-value customers.
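The assignment logic described in the Results can be written as a small decision rule. The sketch below is a minimal illustration: the function name and both thresholds are hypothetical, since the abstract does not give the cut-off values used to separate high from low CLV or high from low churn risk.

```python
def assign_model(clv, churn_risk, clv_threshold=1000.0, risk_threshold=0.5):
    """Map a customer's CLV and churn risk score to a model label.

    ASSUMPTION: both thresholds are placeholders, not values from the study.
    """
    high_value = clv >= clv_threshold
    high_risk = churn_risk >= risk_threshold
    if high_value and high_risk:
        return "RNN 2L"        # high value, high risk: most precision needed
    if high_value:
        return "ANN 3L"        # stable, high-value relationships
    return "general RNN"       # low-value customers


customers = [
    {"id": "c1", "clv": 2500.0, "risk": 0.8},
    {"id": "c2", "clv": 2500.0, "risk": 0.1},
    {"id": "c3", "clv": 120.0, "risk": 0.9},
]
for c in customers:
    print(c["id"], assign_model(c["clv"], c["risk"]))
```

Keeping the rule separate from the models makes it easy to retune the thresholds as CLV estimates are updated, without retraining anything.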

Discussion. This work has shown that the CVLV framework strategically optimizes churn prediction by aligning deep learning models with customer value-risk profiles. The data obtained confirm that ANN 3L provides optimal robustness while RNN 2L achieves superior accuracy for temporal patterns, together enabling more efficient and targeted retention interventions across industries. This approach can be deployed across the telecommunications, banking and retail sectors and connects technical model performance with strategic decision-making, enabling organizations to deploy retention efforts effectively by aligning model capability with the customer's value and probability of churn. The findings indicate that strategic model assignments based on CLV-risk profiles improved retention efficiency without compromising predictive reliability.

Conclusion. The main results are that the ANN 3L model provides the optimal balance of accuracy (0.875) and robustness (loss: 0.25) for churn prediction, while the RNN 2L achieves peak accuracy (0.90) for high-risk segments. The practical significance lies in the proposed CVLV framework, which enables businesses to strategically align deep learning model selection with customer lifetime value, improving retention efficiency. Further research will focus on integrating real-time CLV updates and validating the framework across additional industry domains.

A new algorithm for processing optical flows under noise with unknown probabilistic characteristics has been developed. The method is based on minimax stochastic estimation and parametric identification of linear system regions. The algorithm provides high accuracy with low computational costs. Numerical simulations confirm errors of a few percent, even with high noise levels. The results are applicable to machine vision systems, unmanned navigation, and space exploration.

Introduction. Methods for processing information contained in variations in the optical flow intensity during object motion are widely used in numerous technical applications, including space research, technical diagnostics, machine (technical) vision, object tracking in digital images, and autonomous navigation of unmanned vehicles. Among these methods, monocular techniques for estimating the parameters of the video camera's own motion have demonstrated the greatest practical efficiency, both those based on a cartographic analysis of the underlying surface and those using various algorithms for estimating optical flow (velocity field) parameters. Existing velocity field estimation algorithms offer advantages such as operability in the absence of terrain maps and computational costs that onboard processors can easily handle. However, their practical application is significantly complicated by the inevitable noise in optical measurements, which can have a wide variety of physical origins and dramatically reduce the accuracy of optical flow parameter estimation. Therefore, the objective of the research discussed in this paper is to address the problem of simultaneous high-precision estimation of the intensity of optical flow and identification of its parameters under conditions of measurement noise with unknown probabilistic characteristics. A theoretical solution to this problem enables the development of a new general approach to the synthesis of robust algorithms for high-precision optical flow processing in video monitoring systems. When applied in practice, this will ensure noise immunity and the required accuracy characteristics for machine vision systems, autonomous navigation systems of unmanned vehicles, and other applications.

Materials and Methods. The solution was obtained by monocular methods for determining the camera's own motion and minimizing a regularized quadratic criterion. The starting point for the solution was the formulation of the problem as one of stochastic estimation and parametric identification of a discrete linear nonstationary system while observing its state vector under interference with an unknown probability distribution. The synthesis of the “estimation-identification” algorithm in this formulation was implemented as a procedure that guaranteed the highest accuracy in the minimax sense. Minimizing the resulting minimax criterion allowed us to construct an algorithm for estimating and parametrically identifying the optical flow as a stable vector recurrent procedure, easily implemented in onboard computers of moving objects.
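The problem statement above can be written schematically as follows. The notation is an assumption introduced here for illustration and is not taken from the paper: $x_k$ is the state vector (optical flow intensity at the tracked points), $\theta$ is the vector of unknown parameters, $A_k(\theta)$ is a time-varying transition matrix, $z_k$ is the observation, and $\xi_k$ is measurement noise whose distribution $p_\xi$ is known only to belong to some admissible class $\mathcal{P}$.

$$
x_{k+1} = A_k(\theta)\,x_k, \qquad z_k = x_k + \xi_k, \qquad k = 0, 1, 2, \dots
$$

$$
(\hat{x}_k, \hat{\theta}_k) = \arg\min_{\hat{x},\,\hat{\theta}}\; \max_{p_\xi \in \mathcal{P}}\; J(\hat{x}, \hat{\theta};\, p_\xi)
$$

Here $J$ stands for the accuracy criterion (a regularized quadratic one, according to the abstract). In a generic minimax formulation of this kind, the estimate is chosen to perform best against the least favorable admissible noise distribution, which is what makes the procedure robust when the noise statistics are unknown.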

Results. The problem of simultaneous high-precision estimation of the optical flow intensity and identification of its parameters under measurement noise with unknown probabilistic characteristics is solved. This problem has not been considered in the scientific literature to date. The solution will enable the development of an approach to the synthesis of robust algorithms for high-precision processing of optical flows in video monitoring systems. In practical use, it will ensure noise immunity and the required accuracy characteristics of machine vision systems, autonomous navigation systems of unmanned vehicles, and similar applications. The practical applicability of the developed algorithm for estimating and identifying optical flow parameters was assessed through numerical simulation under non-Gaussian measurement noise. Despite the high level of measurement noise specified, the errors in estimating the optical flow intensity at all tested coordinate points converged rapidly to steady-state values and remained very small in the steady-state mode (a few percent of the maximum value of the optical flow intensity).

Discussion. The obtained data confirm that the proposed algorithm has such advantages over known optical flow processing algorithms as the ability to estimate the intensity of the optical flow and identify its parameters under noise conditions, whose probability distributions are a priori unknown. It is characterized by high accuracy and robustness, and it does not require high computational costs.

Conclusion. The practical significance of the developed algorithm consists, firstly, in the possibility of high-precision stable processing of the optical flow under the conditions of uncertain probabilistic nature of measurement errors, and secondly, in the computational efficiency of the developed “estimation-identification” procedure. This, in turn, provides its successful practical application in solving optical information processing problems in vision systems, navigation of autonomous robotic systems, space exploration, technical diagnostics, and other fields.

Data on wearable sensors for Parkinson's disease are systematized. Nine devices for monitoring motor symptoms are analyzed. Two concepts are identified: minimalism and real-time detection. A minimum validation set for each class of tasks is proposed. Conditions for unifying protocols and accuracy criteria are defined. The results are applicable to personalized diagnostics and therapy.

Introduction. Parkinson's disease (PD) requires objective and continuous monitoring of motor symptoms. Wearable sensors are a promising tool for improving diagnostic accuracy and monitoring disease dynamics. However, they are underutilized in clinical practice due to the lack of uniform standards and limited data reproducibility. The presented study fills this gap. The objectives of the research are to analyze current approaches to the use of wearable systems for monitoring motor symptoms of PD, identify limitations (including those related to validation standards), and determine ways to overcome them for the efficient use of sensors in clinical practice.

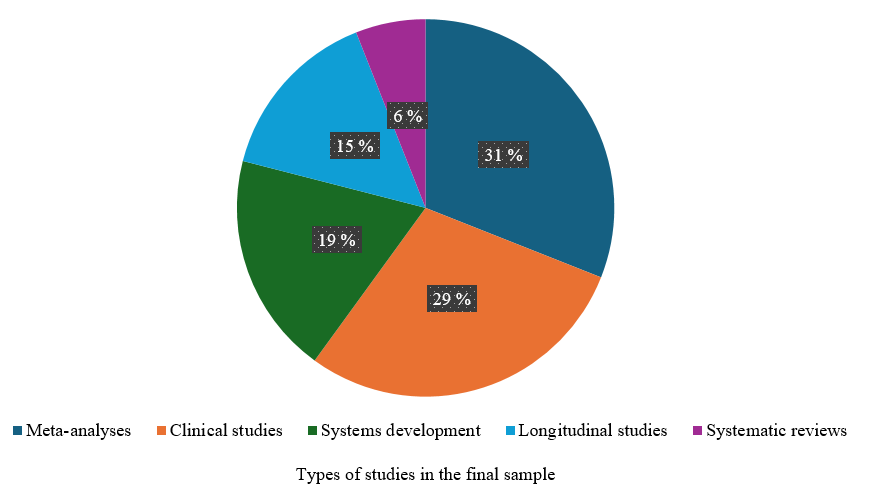

Materials and Methods. Using the PRISMA 2020 methodology, a literature search was conducted for the years 2020–2025 in PubMed, Scopus, Web of Science, and Elibrary.ru. Peer-reviewed studies on the development, validation, and application of wearable devices for assessing gait, tremor, bradykinesia, and dyskinesia were examined. Nine key terms in digital medicine and neurodiagnostics in Russian and English were used for the search: “Parkinson's disease”, “digital biomarkers”, “wearable devices”, and others. The final sample of 48 studies was dominated by meta-analyses (31%) and clinical studies (29%). Nineteen percent of the sources discussed the development of monitoring systems, 15% were longitudinal studies, and 6% were systematic reviews.
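As a quick arithmetic check, the reported shares can be converted back into approximate study counts. The counts below are inferred from the rounded percentages and the sample size of 48; they are not stated in the abstract.

```python
TOTAL_STUDIES = 48

# Shares of the final sample as reported in the abstract (percent).
shares_percent = {
    "meta-analyses": 31,
    "clinical studies": 29,
    "monitoring system development": 19,
    "longitudinal studies": 15,
    "systematic reviews": 6,
}

# Approximate counts, inferred by rounding (an assumption, not reported data).
approx_counts = {
    kind: round(TOTAL_STUDIES * pct / 100) for kind, pct in shares_percent.items()
}

total_share = sum(shares_percent.values())
total_count = sum(approx_counts.values())
```

The shares sum to 100%, and the rounded counts (15, 14, 9, 7, and 3) add up to the full sample of 48, so the reported breakdown is internally consistent.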

Results. Descriptions of nine wearable devices for monitoring motor performance in patients with PD were compared. The types of metrics, clinical scenarios, and tasks were considered. Two concepts of the devices under study were outlined:

- minimalism (one sensor, high comfort level, focus on integral indices);

- real-time detection (emphasis on episodes and instant recognition).

These two cases required different labeling standards, analysis windows, and clinical significance criteria. To improve the comparability of results, a “minimum validation set” specific to the class of problems was needed. Conditions for overcoming these contradictions were:

- unification of data collection protocols, metric sets, and indicators;

- external and multicenter validation (labeling, accuracy criteria);

- algorithm stability to device changes;

- clinical utility criteria.

Discussion. The widespread use of wearable devices for analyzing motor symptoms in patients with PD is hampered by a lack of analytical and clinical validation standards and economic ambiguity of implementation. In general, five of the devices reviewed show promise. However, clinical data on their efficiency and impact on quality of life are insufficient, as research is primarily focused on the potential of the concepts (accuracy of the algorithms) rather than the practical value and readiness for everyday use of real devices. There is little research on external (multicenter) transferability, unified endpoints, and clinical utility.

Conclusion. Current data on the capabilities and limitations of wearable sensors for Parkinson's disease have been systematized. Widespread adoption of such devices is impossible without standardization and unified criteria for efficiency, safety, and economic viability. Addressing these identified challenges will transform approaches to diagnosis and treatment, making wearable systems a key tool in personalized medicine.

General principles of the electronic structure of chalcogenides, halides, and oxides are studied using quantum-mechanical methods. The top of the valence band is formed by the p-states of electronegative elements. The bottom of the valence band is formed by the s-states of these same elements. Calculations have revealed fine structure details invisible in experiments. The results are applicable to modeling optoelectronic materials. The approach allows predicting the properties of new compounds of a given composition.

Introduction. Modern quantum and optoelectronics, as well as nonlinear optics, place high demands on the physical and chemical properties of the materials used. This necessitates, among other things, the search for new materials that possess the properties required for a given application. At the same time, this approach can complicate the composition and crystal structure of the resulting compounds. The electronic structure of complex compounds determines their electrical, optical, magnetic, and chemical properties. These properties are unique to each compound. However, it is known that different compounds that are similar in some important parameters, for example isoelectronic ones, exhibit similarities in the structure of their electronic shells. The accumulation of such information on individual compounds and their groups necessitates generalizing the data obtained. The research objective is to consider some general characteristics of the electronic structure exhibited by groups of different compounds (chalcogenides, halides, and oxides).

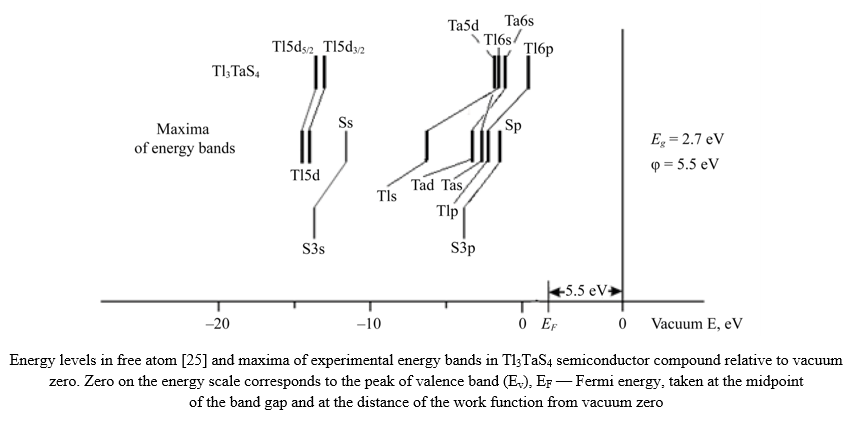

Materials and Methods. The subjects of study were three groups of compounds: chalcogenides Tl3TaS4, Tl3PS4, Sn2P2S6, InPS4, Cu2CdGeS4, Ag2CdSnS4, Ag2HgSnS4, halides Cs2HgX4 (X = Cl, Br, I), group APb2Br5 (A = K, Rb), and oxides La2Zr2O7, Nd2Zr2O7, Sm2Zr2O7, Eu2Zr2O7, Gd2Zr2O7. The research method involved quantum-mechanical calculations within the framework of density functional theory with various exchange-correlation potentials. The potentials used accounted for strong correlations between d- and f-electrons and yielded a band gap value close to the experimental value.

Results. Quantum-mechanical calculations of the electronic state densities and optical characteristics of a number of chalcogenides, halides, and oxides were performed. Partial and total electron densities of states (DOS) were presented. The total density of states was compared with experimental X-ray photoelectron spectra (XPS). The validity of the calculation results was confirmed. The top of the valence band was formed by the p-states of the most electronegative elements (S, Se, Te, Br, O), whereas the bottom of the valence band was formed by the s-states of these same electronegative elements.

Discussion. Based on the calculations, general conclusions were drawn regarding the similarities in the valence band structure of the compounds considered. Using the compound Tl3TaS4 as an example, it was shown that in a solid, compared to the energies in a free atom, the binding energy of the levels for electronegative elements was significantly reduced, while for electropositive elements, it was increased. A rare-earth element (using Eu2Zr2O7 as an example) significantly altered the electron-energy structure, such that the electron states of the rare-earth element (4f-, 5p-) and the 5s-states of europium (Eu) altered the structure of the valence band of pyrochlore (Eu2Zr2O7). The calculated total and partial DOS were compared with experimental X-ray and X-ray photoelectron spectra, which confirmed the accuracy of the calculations. However, the calculated DOS curves contained numerous fine-structure elements that were obscured by instrumental distortion in the experimental curves. Thus, the calculation complemented the experiment very well, providing a more detailed picture of the electron-energy structure of the studied compounds.

Conclusion. The research objective was achieved: some general characteristics of the electronic structure exhibited by groups of different compounds (chalcogenides, halides, and oxides) were examined. The problems of identifying the states that determined the features of the electronic structure and optical characteristics of the studied groups of compounds were solved. This research can be used in the modeling of new materials with desired properties.

A mathematical model of a convective dehydrator for drying food products is developed. The model takes into account air circulation and heat loss through the chamber walls. Experimental testing has shown a temperature calculation error of less than 0.5°C. The identified air circulation coefficient exceeds ten cycles. The results are applicable to optimizing energy consumption in industrial drying. The model is used in the design of household and industrial dehydrators.

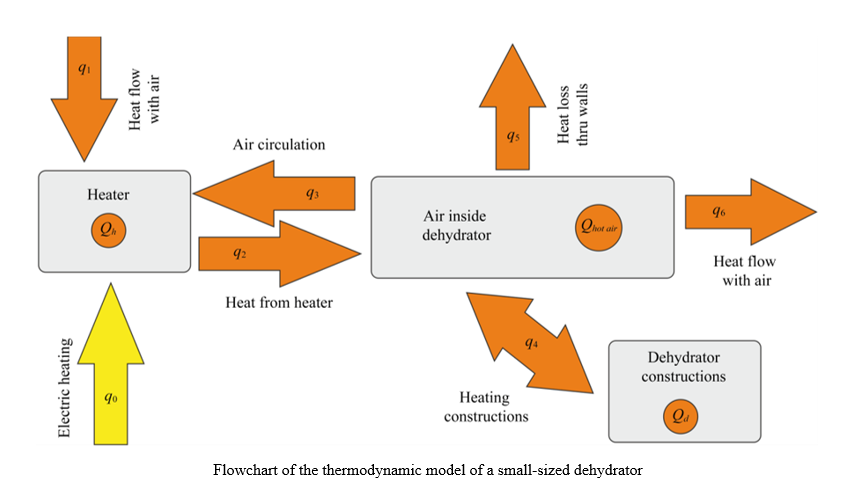

Introduction. Convective drying of various types of food raw materials is one of the most common methods of food preservation. Over three million tons of dried fruits alone are preserved worldwide each year, and the volume continues to grow. Because the process is long and energy-intensive, with almost 50% of the energy spent directly on removing moisture, optimizing drying is a challenge. Targeted and reasonable optimization can be performed only if there is a common mathematical model of equipment and drying processes. However, when modeling the drying process, as a rule, a mathematical model of the equipment is not used, which limits the applicability of the results obtained. This is the knowledge gap that the proposed study is designed to eliminate. The article presents the results of the development and identification of the parameters of a mathematical model of a small-sized dehydrator used as an experimental installation for the study of food drying processes. The research objective is to develop a mathematical model of the thermal subsystem of a dehydrator that takes into account the processes of heat and mass transfer. To achieve this goal, the following tasks must be solved: to analyze the design of the dehydrator and take into account the effect of the control system; to build a mathematical model of the dehydrator in the form of an ordinary differential equation (ODE) system; to develop a simulation model of the dehydrator in the MATLAB/Simulink package; to conduct experimental studies to obtain data on temperature and power consumption; to identify the parameters of the mathematical model, including the amount of air flow and the circulation coefficient; to verify the obtained model by comparing the results of simulation and experiment.

Materials and Methods. A small-sized convective dehydrator equipped with an original microprocessor control system was used as a modeling object. This system was designed to provide a preset temperature regime and collect data on the parameters of the drying process: temperature, humidity, air pressure, and others. The system used three sensors: two BME-280 sensors and one DS18B20 sensor. Telemetry data and control commands were transmitted via a bot on the Telegram platform. The mathematical model of the dehydrator was constructed in the class of ODEs by the method of accumulators and flows. The parameters of the mathematical model were identified both by direct measurements of the structural elements of the dehydrator and using data obtained during experimental studies. The least squares method (LSM) was used for parametric identification of the model. The calculations were performed in the MATLAB software package.
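The idea of least-squares parameter identification for an ODE model can be illustrated with a deliberately simplified sketch. It is not the authors' model, which is a third-order nonlinear system built in MATLAB/Simulink. The sketch assumes a single heat accumulator with the energy balance dT/dt = a·P − b·(T − T_amb), where a = 1/C and b = k/C; the parameter values and the synthetic data are hypothetical.

```python
def simulate(a, b, power, t_amb, t0, dt):
    """Explicit Euler integration of dT/dt = a*P - b*(T - T_amb)."""
    temps = [t0]
    for p in power[:-1]:
        t = temps[-1]
        temps.append(t + dt * (a * p - b * (t - t_amb)))
    return temps


def identify(temps, power, t_amb, dt):
    """Least-squares estimate of (a, b) from temperature and power records.

    The finite-difference derivative y_i = (T[i+1] - T[i]) / dt is regressed
    on u_i = P[i] and v_i = -(T[i] - T_amb) via the 2x2 normal equations.
    """
    s_uu = s_uv = s_vv = s_uy = s_vy = 0.0
    for i in range(len(temps) - 1):
        y = (temps[i + 1] - temps[i]) / dt
        u = power[i]
        v = -(temps[i] - t_amb)
        s_uu += u * u
        s_uv += u * v
        s_vv += v * v
        s_uy += u * y
        s_vy += v * y
    det = s_uu * s_vv - s_uv * s_uv
    a = (s_uy * s_vv - s_uv * s_vy) / det
    b = (s_uu * s_vy - s_uv * s_uy) / det
    return a, b


# Hypothetical "true" parameters: C = 500 J/K, k = 5 W/K.
A_TRUE, B_TRUE = 1.0 / 500.0, 5.0 / 500.0
T_AMB, DT = 25.0, 1.0

# Synthetic experiment: heater on at 250 W for 300 s, then off for 300 s.
power = [250.0] * 300 + [0.0] * 300
temps = simulate(A_TRUE, B_TRUE, power, T_AMB, T_AMB, DT)

a_est, b_est = identify(temps, power, T_AMB, DT)
```

Because the synthetic data are noise-free and generated with the same discretization, the estimates reproduce the assumed parameters almost exactly. With real sensor data the residuals would not vanish, and the fit quality would have to be checked against measured temperatures.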

Results. A mathematical model of thermal processes in a dehydrator has been developed in the form of a system of ordinary nonlinear differential equations of the third order. The model takes into account both the air flow coming out of the dehydrator and the air circulation inside it. The total coefficient of heat loss through the walls of the dehydrator is also determined, and its dependence on the temperature difference inside and outside the installation is shown. The developed model is presented both analytically and as a model in the MATLAB/Simulink system. The experimental verification of the model has shown high accuracy: the maximum deviation of the calculated temperatures from the measured ones was less than 0.5°C. The identification method has determined the key parameters of the system: the volume flow of air through the heater (14.1 l/s), and the air circulation coefficient (11.3), which indicates a more than tenfold passage of air flow through the working chamber. It has been found that intensive circulation significantly speeds up the drying process compared to natural convection. The model provides physical interpretability of the parameters and requires a minimum amount of experimental data.

Discussion. The developed mathematical model of the dehydrator based on ordinary differential equations showed high accuracy (error less than 0.5°C) in the operating temperature range. The proposed energy approach made it possible to identify the volumetric air flow (3.1 l/s) and the circulation coefficient (α = 10.2), which cannot be measured directly. It is established that the air performs more than 10 cycles inside the chamber before exiting, which significantly intensifies heat and mass transfer. The coefficient of heat transfer through the walls depends linearly on the temperature difference, which is consistent with the theory of natural convection. Unlike empirical and neural network models, the proposed approach requires less experimental data and provides physical interpretability of the parameters. The model creates the basis for optimizing food drying processes.

Conclusion. The developed and experimentally verified mathematical model of the thermal subsystem of a small-sized convective dehydrator reproduces the measured temperatures with high accuracy and allows for the identification of parameters that are difficult to measure directly: volumetric air flow rate and circulation coefficient. The research results can serve as the basis for developing a comprehensive model of the food dehydration process and optimizing the device operating modes. The model is applicable to the design and improvement of domestic dehydrators.